A single poor-quality deliverable can do more than create an issue with the customer. It can start an internal cycle of poor quality culture, rippling through teams, eroding trust, and setting a precedent that’s hard to shake.

That’s why project quality control isn’t just a checkbox; it’s a safeguard for reputation and morale alike.

Luckily, project management methodologies provide a framework for establishing project quality, one that helps ensure your outputs meet expectations every time.

What is Quality Control?

Quality control is the process that businesses use to ensure that a product or service adheres to a predefined set of quality standards or meets the requirements of customers or clients.

In the Project Management Body of Knowledge (PMBOK), Control Quality falls under the delivery performance domain.

Quality Control vs. Quality Assurance

This distinction is commonly misunderstood. Quality Control is the measurement of outputs to determine whether they meet the acceptance criteria. Quality Assurance, on the other hand, analyzes the processes and systems that are producing the outputs. If you’re measuring the systems, it’s QA. If you’re measuring the outputs, it’s QC.

Measuring Outputs

Quality criteria should have been identified during the planning phase for each project deliverable.

When project deliverables are produced, they are subjected to quality control before they are delivered to their end user. That means they need to be measured and compared against the standard that’s been identified in the project management plan. If you don’t know the quality of your outputs, you cannot make effective decisions.

Sometimes outputs are singular in nature, like a project report, which doesn’t lend itself well to quantitative measurement against a standard. But there are always quality standards that apply. For example, when the reader of the report notices technical errors or excessive spelling mistakes, they are clearly applying a quality standard to your work. So what is required to meet this standard? The project management plan should identify things like expert review, grammatical review, management review, and the like. When the review is done, quality control has been completed.

Examples of quality control include:

- Inspection of products leaving the production line.

- Expert technical review of reports.

- Trial runs prior to plant commissioning.

QC Forms

During the planning phase the project manager should develop appropriate forms for measuring the quality of the deliverables. This ensures that consideration has been given at the outset to measuring the right things and how they will be reported. When the inevitable issues arise and questions are asked, it is often invaluable to be able to produce a document that confirms the quality of the deliverables.

It is also essential to confirm that the deliverables have met the needs of the customer (or at least the internal perception of the customer’s needs).

Quality Metrics

The project management plan will identify what will be measured and the pass/fail criteria. In the case of a manufacturing facility for a new line of pens, you might measure whether they draw a straight line without problems, if they come apart after using them for 10 minutes, or if the ink dries up after leaving them open for 4 hours, and so on. You would choose to take a predefined number of pens off the assembly line for testing. The results would be graphed or otherwise presented to the interested parties.

Some basic knowledge of statistics is helpful. Determining the standard deviation, mean, median, or mode of a data set helps to visualize the impact and severity of the results. Many other statistical tools are available, and in fact the term quality control sometimes includes extensive statistical presentation of the results. Although the analysis of the results officially falls under quality assurance instead of quality control, there is a large overlap when a lot of statistical analysis is done (are you presenting the data or analyzing it?).
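As a rough illustration of the statistics mentioned above, here is a minimal sketch using Python's standard `statistics` module. The ink-dry times and the `summarize` helper are hypothetical and exist only for this example.

```python
import statistics

# Hypothetical data: hours until the ink dried for ten pens pulled from the line.
dry_times_hours = [4.2, 3.9, 4.5, 4.1, 3.8, 4.2, 4.4, 4.0, 4.2, 3.7]


def summarize(values):
    """Return the basic descriptive statistics for a list of measurements."""
    return {
        "mean": statistics.mean(values),
        "median": statistics.median(values),
        "mode": statistics.mode(values),
        "stdev": statistics.stdev(values),  # sample standard deviation
    }


summary = summarize(dry_times_hours)
for name, value in summary.items():
    print(f"{name}: {value:.2f}")
```

A small standard deviation relative to the mean suggests the pens behave consistently; a large one suggests the sample hides very different units.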

Here is a list of possible quality metrics:

- Failure rate

- Defect frequency

- On-time performance

- On-budget performance

- Reliability

- Mean Time Between Failures (MTBF)

- Mean Time to Repair (MTTR)
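Several of these metrics reduce to simple ratios. The sketch below assumes the common definitions (MTBF as operating time divided by number of failures, MTTR as average repair time, failure rate as failed units over tested units). The function names and figures are hypothetical.

```python
def mtbf(total_operating_hours, failure_count):
    """Mean Time Between Failures: operating time per failure."""
    if failure_count == 0:
        raise ValueError("MTBF is undefined when there are no failures")
    return total_operating_hours / failure_count


def mttr(repair_hours):
    """Mean Time to Repair: average duration of the recorded repairs."""
    if not repair_hours:
        raise ValueError("MTTR is undefined when there are no repairs")
    return sum(repair_hours) / len(repair_hours)


def failure_rate(failed_units, tested_units):
    """Fraction of tested units that failed."""
    if tested_units == 0:
        raise ValueError("No units were tested")
    return failed_units / tested_units


# Hypothetical figures for a piece of production equipment.
repairs = [2.0, 3.5, 1.5, 3.0]           # hours spent on each repair
equipment_mtbf = mtbf(1200.0, len(repairs))
equipment_mttr = mttr(repairs)
pen_failure_rate = failure_rate(3, 200)  # 3 failed pens out of 200 tested

print(f"MTBF: {equipment_mtbf:.1f} h")
print(f"MTTR: {equipment_mttr:.1f} h")
print(f"Failure rate: {pen_failure_rate:.1%}")
```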

Prevention vs. Inspection

It is important to note the distinction between prevention, which keeps errors from happening, and inspection, which keeps errors out of the hands of the customer. Inspection is generally the domain of Quality Control. Prevention is concerned with improving the processes so that problems do not occur, so it belongs to Quality Assurance. But there is an overlap, particularly when Quality Control identifies issues with the end product and the systems and processes need to be updated.

Tolerance vs. Control Limits

A few definitions which should guide your world view in the area of quality:

- Tolerance is the range of acceptable results. If a result is out of tolerance, it must be rejected.

- Control limits are boundaries which represent acceptable variation for the purpose of controlling and manipulating the process. A result can be within acceptable tolerances, but signify a process which is out of control and/or requires additional development.
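To make the difference concrete, the sketch below checks a measurement against both boundaries. It assumes a simplified rule in which control limits sit three sample standard deviations either side of a baseline mean; real control charts often use other estimators and extra run rules. The diameters, tolerance, and function names are hypothetical.

```python
import statistics


def control_limits(baseline, sigmas=3.0):
    """Estimate lower/upper control limits from baseline process data."""
    center = statistics.mean(baseline)
    spread = statistics.stdev(baseline)
    return center - sigmas * spread, center + sigmas * spread


def assess(value, tolerance, limits):
    """Check one measurement against the tolerance and the control limits."""
    tol_low, tol_high = tolerance
    lcl, ucl = limits
    return {
        "accept_unit": tol_low <= value <= tol_high,   # tolerance decides rejection
        "process_in_control": lcl <= value <= ucl,     # control limits flag the process
    }


# Hypothetical pen barrel diameters in millimetres.
tolerance_mm = (9.8, 10.2)
baseline_mm = [10.00, 10.01, 9.99, 10.02, 9.98, 10.00, 10.01, 9.99]
limits = control_limits(baseline_mm)

for diameter in (10.01, 10.10, 10.25):
    print(diameter, assess(diameter, tolerance_mm, limits))
```

With this data, a 10.10 mm barrel is still an acceptable unit, yet it falls outside the control limits, which is the signal to investigate the process before rejects start appearing.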

Attributes vs. Variables Sampling

When taking quality control measurements, the data can be collected based on the following two methods:

- Attributes sampling uses a single pass/fail criterion. The product either passes or fails.

- Variables sampling uses a sliding-scale criterion. The product is rated on a scale of 1-10, class A-C, or similar.
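One way to picture the two methods is to record both kinds of result for the same sample. This is only a sketch: the `PenResult` record, its fields, and the ratings are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PenResult:
    draws_straight_line: bool  # attributes sampling: pass or fail
    ink_rating: int            # variables sampling: 1 (poor) to 10 (excellent)


def attribute_pass_rate(results):
    """Share of sampled units that passed the pass/fail check."""
    return sum(r.draws_straight_line for r in results) / len(results)


def mean_rating(results):
    """Average score on the sliding scale."""
    return sum(r.ink_rating for r in results) / len(results)


# Hypothetical sample of five pens.
sample = [
    PenResult(True, 8),
    PenResult(True, 7),
    PenResult(True, 9),
    PenResult(False, 3),
    PenResult(True, 8),
]

print(f"Pass rate: {attribute_pass_rate(sample):.0%}")
print(f"Mean ink rating: {mean_rating(sample):.1f}")
```

The pass rate tells you how many units to reject; the rating tells you how far the typical unit sits from the edge of acceptability.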

Planned vs. Actual Data

For some projects, the planned results should be identified and then actual results tracked side by side to ensure the conformance of the deliverables. This type of comparison helps tremendously in identifying the schedule and cost impacts of quality improvement.
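A side-by-side comparison can be as simple as subtracting planned values from actual ones. The metric names and numbers below are hypothetical and only illustrate the idea.

```python
def variances(planned, actual):
    """Return actual minus planned for every planned metric."""
    return {name: actual[name] - planned[name] for name in planned}


# Hypothetical quality targets and the results recorded so far.
planned = {"defects_per_1000": 5.0, "review_hours": 40.0}
actual = {"defects_per_1000": 7.5, "review_hours": 46.0}

deltas = variances(planned, actual)
for name, delta in deltas.items():
    print(f"{name}: planned {planned[name]}, actual {actual[name]}, variance {delta:+}")
```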

The 7 Basic Quality Tools

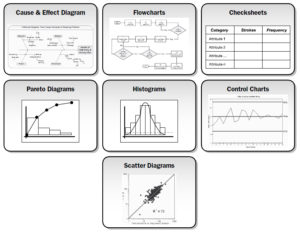

To solve quality-related problems, the project manager utilizes the seven basic quality tools, also known as 7QC:

- Cause-and-effect diagrams, also known as fishbone diagrams and Ishikawa diagrams, place a problem statement at the center and attempt to trace the source back to its actionable root cause.

- Flowcharts illustrate a process and its various steps to break it down and further analyze each piece.

- Checksheets organize facts in a manner that will facilitate the effective collection of useful data about a potential quality problem.

- Pareto diagrams are excellent for isolating the one or two issues which are causing the lion’s share of the quality problems.

- Histograms are a special form of bar chart which illustrate the central tendency, dispersion, and shape of a statistical distribution.

- Control charts compare the data with the upper and lower control limits to determine whether or not a process is stable.

- Scatter diagrams, also called correlation charts, compare the change in a dependent variable, Y, to the change in an independent variable, X.
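Of the tools above, the Pareto diagram is the easiest to sketch in code: sort the defect categories by count and track the cumulative percentage. The defect categories, counts, and the 80% cutoff below are hypothetical choices for illustration.

```python
def pareto_table(defect_counts):
    """Return (category, count, cumulative %) rows, largest category first."""
    total = sum(defect_counts.values())
    rows = []
    cumulative = 0
    ordered = sorted(defect_counts.items(), key=lambda item: item[1], reverse=True)
    for category, count in ordered:
        cumulative += count
        rows.append((category, count, 100.0 * cumulative / total))
    return rows


def vital_few(defect_counts, threshold_pct=80.0):
    """Return the leading categories that together reach the threshold."""
    selected = []
    for category, _count, cumulative_pct in pareto_table(defect_counts):
        selected.append(category)
        if cumulative_pct >= threshold_pct:
            break
    return selected


# Hypothetical defect tally from pen inspections.
defects = {
    "cap loose": 30,
    "ink leak": 52,
    "misprint": 6,
    "scratched barrel": 8,
    "bent clip": 4,
}

for category, count, cumulative_pct in pareto_table(defects):
    print(f"{category:<18}{count:>4}{cumulative_pct:>8.1f}%")
print("Focus on:", vital_few(defects))
```

In this made-up tally, two of the five categories account for more than 80% of the defects, which is where improvement effort would go first.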

Project Changes

In project management theory, the identification of quality control problems must result in project changes. These changes must be documented in the project management plan, which requires updating and approval by the relevant authorities. If the project schedule, cost, communication needs, or any other item must change, that change must be documented as well.
